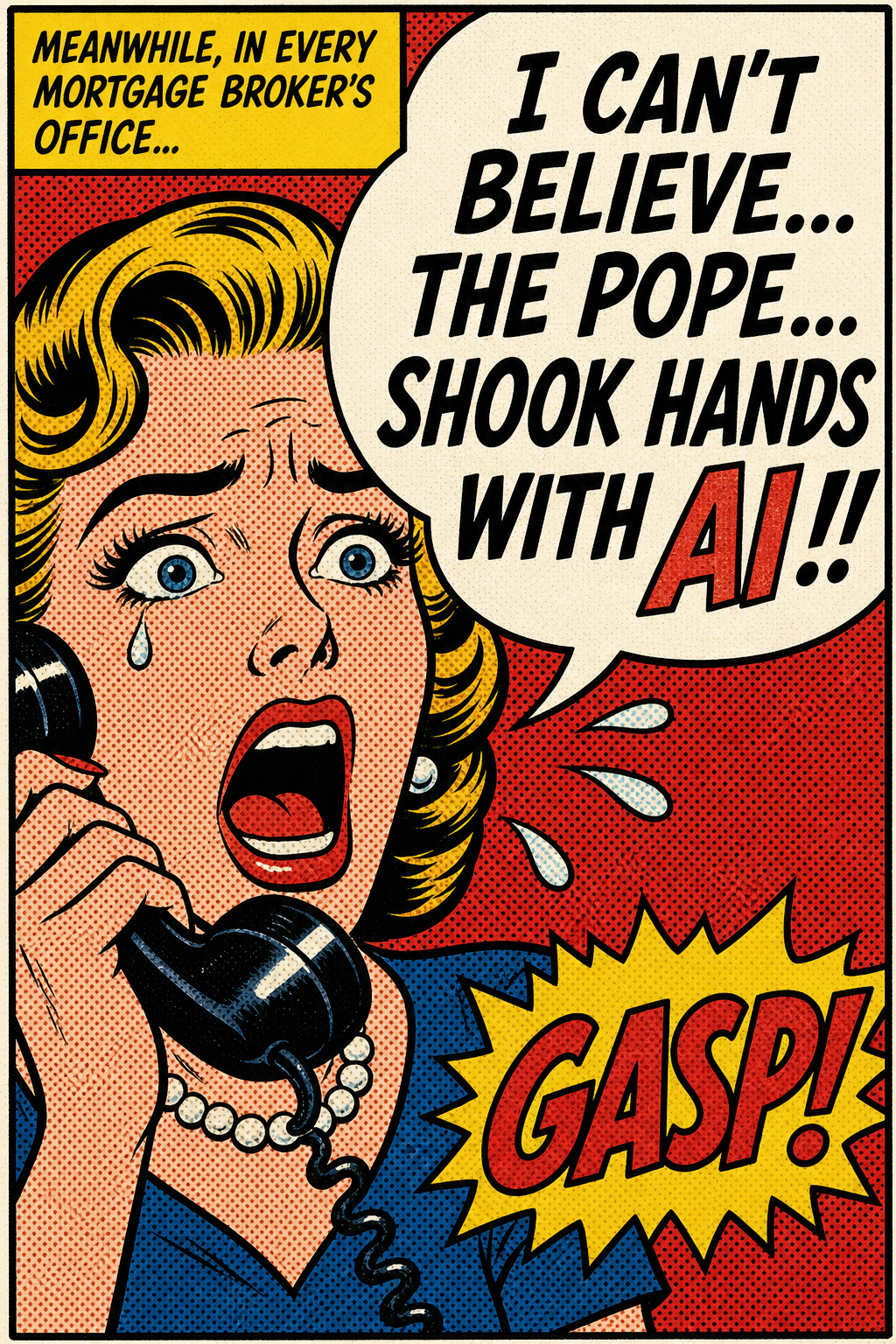

Pope Leo XIV's first encyclical dropped Monday 25 May. Title: "Magnifica Humanitas: On safeguarding the human person in the time of artificial intelligence." He presented it side-by-side with Chris Olah, co-founder of Anthropic (the makers of Claude). They shook hands. There are photos. Otto, we live in a comic book now.

$1.25B × 12 months = $15,000,000,000 a year.

That's roughly the GDP of Fiji, paid to Elon Musk, every 12 months, just for the privilege of thinking.

Anthropic's reported pre-money valuation on their $30B raise this week (Sequoia, Dragoneer, Greenoaks, Altimeter).

$44B: Anthropic's annualised enterprise revenue. Up 80× year-over-year. The "AI is hype" crowd has gone very quiet.

More than a third of that revenue goes straight back out the door to SpaceX, just for compute. The labs literally cannot make enough chips fast enough.
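For anyone who wants to check the napkin math, here is a minimal sketch. It uses only numbers quoted in this issue: the $1.25B monthly compute bill and the $44B revenue figure. Treating the $44B as the revenue the "more than a third" claim refers to is an assumption, and the function names are made up for illustration.

```python
# Sanity check on the compute-bill arithmetic, using figures quoted in this issue.
# Assumption: the $44B annualised revenue is the base for the "more than a third" claim.

MONTHLY_COMPUTE_BILL_USD = 1.25e9   # $1.25B per month
ANNUALISED_REVENUE_USD = 44e9       # $44B per year


def annual_cost(monthly_usd: float) -> float:
    """Scale a monthly bill to a 12-month figure."""
    return monthly_usd * 12


def share_of_revenue(cost_usd: float, revenue_usd: float) -> float:
    """Return cost as a fraction of revenue (0.0 to 1.0)."""
    return cost_usd / revenue_usd


annual = annual_cost(MONTHLY_COMPUTE_BILL_USD)
share = share_of_revenue(annual, ANNUALISED_REVENUE_USD)

print(f"Annual compute bill: ${annual / 1e9:.0f}B")
print(f"Share of revenue: {share:.1%}")
print(f"More than a third: {share > 1 / 3}")
```

On those inputs the annual bill comes to $15B and the share lands just over a third, which is what the claim needs.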

Pope Leo XIV is officially on the record this week. He said AI must "protect human dignity." Then he shook hands with the people who built it.

Forget GPT-this and Claude-that for a second. Look at the shape of what just happened in 5 days:

Translation, Otto: the labs are now uncontested. Governments stepped back. The Church stepped forward. The chips can't keep up. And every business in the world has been put on notice — build with AI or get built over.

The Pope handshake isn't theology — it's a permission slip. The compute deal isn't business news — it's proof the labs will brute-force their way through any constraint. The $44B revenue isn't a benchmark — it's an existence proof that AI-native execution scales.

If you're still treating AI as a "feature" inside your existing org chart in late 2026, you have lost the plot. The winners this year are the ones rebuilding entire functions — CS, ops, underwriting, broking, marketing — around an AI counterpart. Not a chatbot. A counterpart.

Steve's been pressure-testing what an "AI counterpart per function" actually looks like at a real DTC brand. Production economics, in-house manufacturing crossovers, a Vatican-grade level of cap raise diligence. There's a billion-dollar idea cooking in here somewhere. We'll let you in when it's ready.

In the meantime: ask your team what they're still doing by hand that an agent could do tonight. Then ask why they're still doing it tomorrow.

— Steve (with Hermes on the artwork)

Educate & Entertain Otto — Issue 02 · 27 May 2026

Sources: SpaceX S-1 filing · Anthropic / Vatican press · Bloomberg · FT · TechCrunch · Wired.